In precision manufacturing, apparent stability can hide emerging risks in tolerance control, material behavior, and system performance. When quality data looks consistent but underlying variation goes unnoticed, manufacturers, distributors, and researchers face costly failures and strategic misjudgments. This article explores why stable metrics do not always mean reliable output, and how deeper technical intelligence can reveal the true condition of precision components and motion systems.

Why “stable quality” is becoming a more dangerous signal in precision manufacturing

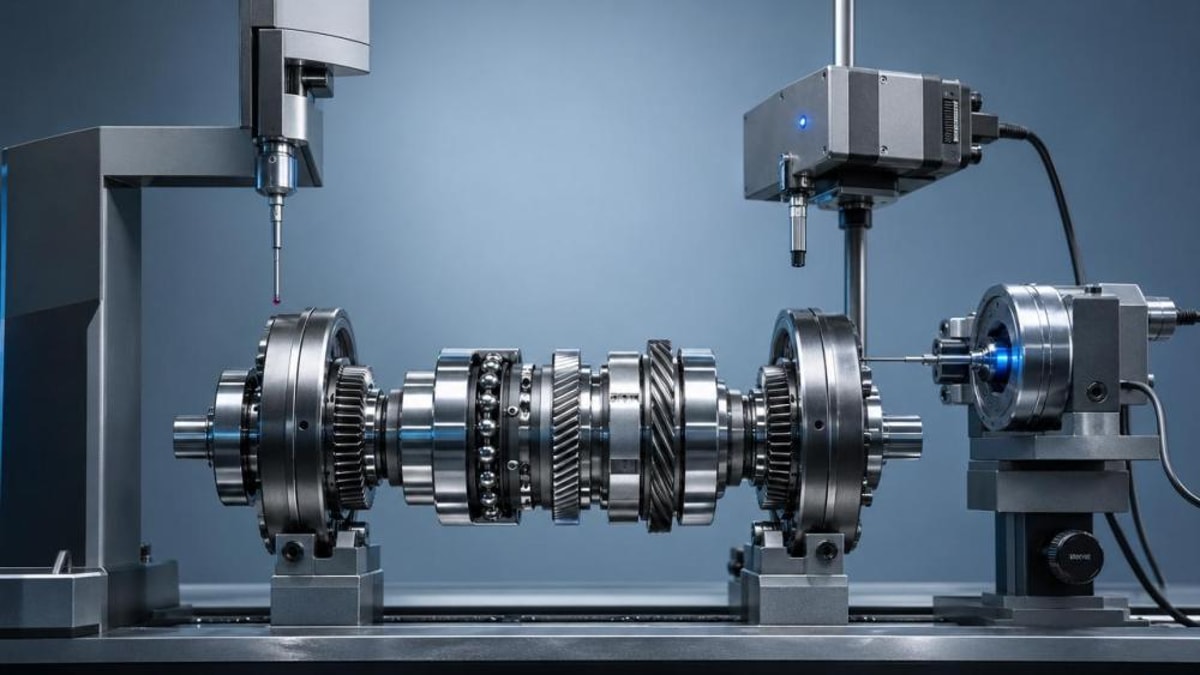

Across global supply chains, precision manufacturing is entering a phase where visible consistency is no longer enough. Parts may pass incoming inspection, process capability may appear acceptable, and field complaints may remain low for months. Yet hidden instability can still build beneath the surface. This is especially true in sectors linked to bearings, transmission assemblies, hydraulic components, sealing interfaces, and tightly matched motion systems.

The reason this matters now is that production environments have changed. Product platforms are more modular, material substitutions happen faster, energy and lubricant conditions vary more widely, and international sourcing introduces subtle shifts in heat treatment, roughness, and dimensional stack-up. In such an environment, traditional quality dashboards often capture the average condition while missing the edges where failure starts.

For information researchers and decision-support teams, this creates a new challenge. The central question is no longer whether a supplier can show stable data, but whether that stability reflects true process robustness. In precision manufacturing, a stable-looking report may hide tool-wear drift, microstructural inconsistency, assembly interaction effects, or sensitivity to operating load. Each of these is a small variation with potentially large consequences.

Key market signals behind this shift

- Higher demand for long-life, low-maintenance components in automated equipment

- More aggressive cost control leading to tighter process windows and material substitutions

- Greater reliance on cross-border sourcing, where nominal compliance may mask performance differences

- Broader use of data dashboards that favor easily visible indicators over underlying failure mechanisms

Why average performance is no longer enough

In many precision manufacturing environments, averages look healthy until application conditions change. A shaft and bearing set may perform well under test-bench conditions but behave differently under thermal cycling, contamination, pulsating loads, or intermittent lubrication. Average dimensional conformance does not guarantee system-level stability when real operating conditions amplify small deviations.
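To make this concrete, here is a minimal sketch of how a shaft-bore clearance that conforms at inspection temperature can close up in service. The dimensions, tolerances, temperature rises and the expansion coefficient (about 11.5e-6 per kelvin, a typical handbook figure for carbon steel) are hypothetical assumptions, and the model considers nothing except linear thermal expansion.

```python
# Minimal sketch: linear thermal expansion (dD = D * alpha * dT) applied to a shaft in a bore.
# All dimensions, tolerances and temperatures below are hypothetical.

ALPHA_STEEL = 11.5e-6  # 1/K, typical handbook value for carbon steel (assumption)


def clearance_um(bore_mm: float, shaft_mm: float,
                 d_t_bore_k: float = 0.0, d_t_shaft_k: float = 0.0,
                 alpha_bore: float = ALPHA_STEEL, alpha_shaft: float = ALPHA_STEEL) -> float:
    """Diametral clearance in micrometres after each part warms by its own temperature rise."""
    bore = bore_mm * (1 + alpha_bore * d_t_bore_k)
    shaft = shaft_mm * (1 + alpha_shaft * d_t_shaft_k)
    return (bore - shaft) * 1000.0


# Hypothetical 50 mm fit: bore 50.020 to 50.035 mm, shaft 49.990 to 50.000 mm.
tight_bore, tight_shaft = 50.020, 50.000            # tightest conforming pair
mid_bore, mid_shaft = (50.020 + 50.035) / 2, (49.990 + 50.000) / 2  # mid-tolerance pair

# In service the shaft runs 70 K above inspection temperature, the housing only 30 K.
at_inspection = clearance_um(tight_bore, tight_shaft)
in_service = clearance_um(tight_bore, tight_shaft, d_t_bore_k=30.0, d_t_shaft_k=70.0)
nominal_in_service = clearance_um(mid_bore, mid_shaft, d_t_bore_k=30.0, d_t_shaft_k=70.0)

print(f"tightest pair at inspection: {at_inspection:.1f} um")
print(f"tightest pair in service:    {in_service:.1f} um (negative = interference)")
print(f"mid-tolerance pair in service: {nominal_in_service:.1f} um")
```

With these assumed numbers, the tightest conforming pair goes from 20 µm of clearance at inspection to roughly 3 µm of interference in service, while the mid-tolerance pair still keeps about 9.5 µm. A summary built around typical parts would describe the second situation and miss the first.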

This is why quality stability must now be interpreted as a layered issue: process stability, material stability, interface stability, and field stability. If only one layer is monitored, false confidence becomes likely. For companies competing in high-end equipment markets, that false confidence can damage both commercial credibility and technical reputation.

The deeper drivers: why hidden variation is rising even when metrics look calm

Several forces are increasing the gap between reported quality and actual reliability in precision manufacturing. The first is complexity compression. More functions are being integrated into fewer components, which means one dimensional deviation can now affect sealing, friction, vibration, and service life at the same time. What once appeared to be an isolated tolerance issue now becomes a system interaction problem.

The second driver is material behavior under changing operating conditions. Precision components made from special steels, engineered polymers, sintered alloys, or coated surfaces may meet specification at delivery but react differently over time. Residual stress, hardness distribution, surface energy, and tribological pairing all influence long-term behavior. These characteristics are often underrepresented in simplified quality reports.

A third driver is measurement strategy itself. Many quality systems still focus heavily on pass/fail dimensions, Cp/Cpk outputs, or sample-based inspection regimes. These are useful, but they do not always detect slow process drift, assembly interaction effects, or variation that only appears under certain loading patterns. A Cpk calculated over a long reporting period, for example, condenses that whole period into one number, so a mean that is slowly moving toward one tolerance limit can still produce an acceptable index. As a result, a process can remain statistically acceptable while becoming practically risky.
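The gap between an acceptable index and a drifting process can be shown with a small synthetic series. The sketch below is an illustration, not a recommended control scheme: it computes Cpk over the whole series and runs an EWMA (exponentially weighted moving average) chart against limits set from an initial baseline window. The data, the tolerance, the smoothing constant of 0.2 and the 3-sigma limit width are all assumptions.

```python
import statistics


def cpk(values, lsl, usl):
    """Process capability index using the overall sample standard deviation."""
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)
    return min(usl - mean, mean - lsl) / (3 * sd)


def ewma_first_signal(values, target, sigma, lam=0.2, width=3.0):
    """Return the index of the first EWMA point outside the asymptotic limits, or None."""
    limit = width * sigma * (lam / (2 - lam)) ** 0.5
    z = target
    for i, x in enumerate(values):
        z = lam * x + (1 - lam) * z
        if abs(z - target) > limit:
            return i
    return None


# Hypothetical diameters in mm: 20 stable parts with +/-0.002 mm alternating noise,
# then 20 parts whose mean creeps upward by 0.0005 mm per part (e.g. tool wear).
NOMINAL, LSL, USL = 10.000, 9.950, 10.050
diameters = [
    NOMINAL + 0.002 * (-1) ** i + (0.0005 * (i - 19) if i >= 20 else 0.0)
    for i in range(40)
]

baseline = diameters[:20]
overall_cpk = cpk(diameters, LSL, USL)
signal_at = ewma_first_signal(
    diameters,
    target=statistics.fmean(baseline),
    sigma=statistics.stdev(baseline),
)

print(f"Cpk over all 40 parts: {overall_cpk:.2f}")
print(f"EWMA first signals drift at part index: {signal_at}")
```

Under these assumptions the whole-series Cpk comes out around 4, far above the commonly used 1.33 benchmark, yet the EWMA chart signals a few parts after the drift begins, while the mean has moved only a few micrometres.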

For intelligence-led organizations, these drivers point to a broader trend: quality in precision manufacturing is moving from a static compliance model to a dynamic behavior model. The winning companies will not just ask whether a part meets tolerance today. They will ask how that part behaves across time, load, interfaces, and supply chain transitions.

Who feels the impact first: suppliers, buyers, distributors, and researchers

The consequences of false stability are not distributed evenly. In precision manufacturing, different actors encounter different forms of risk depending on where they sit in the value chain. Understanding these impact patterns helps information researchers prioritize which signals deserve closer tracking.

Suppliers are often the first to face margin pressure. They may be asked to maintain output consistency despite material volatility, shorter lead times, and growing customization. If internal process control remains too narrow in scope, they can pass inspection while slowly accumulating warranty exposure. This weakens their future negotiating power, especially in premium equipment segments.

Buyers and OEM sourcing teams face a different problem: procurement decisions based on stable-looking documents can hide lifecycle cost inflation. A component with attractive unit pricing may generate higher total cost through rework, downtime, field service, or shortened maintenance intervals. Precision manufacturing decisions therefore increasingly require technical-commercial alignment, not price comparison alone.
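A simple expected-cost calculation shows how this can happen. The sketch below compares two hypothetical offers; the prices, field failure rates, per-failure cost (rework, downtime and field service combined) and maintenance figures are invented for illustration, and the model assumes these costs simply add per unit.

```python
from dataclasses import dataclass


@dataclass
class Offer:
    name: str
    unit_price: float          # purchase price per component
    field_failure_rate: float  # expected failures per unit over the horizon
    cost_per_failure: float    # rework + downtime + field service per failure
    service_interval_h: float  # maintenance interval the component supports
    cost_per_service: float    # cost of one maintenance event attributable to it

    def lifecycle_cost(self, horizon_h: float) -> float:
        """Expected cost per unit over the horizon: purchase + failures + maintenance."""
        failure_cost = self.field_failure_rate * self.cost_per_failure
        service_cost = (horizon_h / self.service_interval_h) * self.cost_per_service
        return self.unit_price + failure_cost + service_cost


# Hypothetical figures only.
a = Offer("A", unit_price=12.0, field_failure_rate=0.005, cost_per_failure=800.0,
          service_interval_h=4000.0, cost_per_service=5.0)
b = Offer("B", unit_price=10.0, field_failure_rate=0.02, cost_per_failure=800.0,
          service_interval_h=2000.0, cost_per_service=5.0)

horizon = 20000.0  # operating hours considered
for offer in (a, b):
    print(f"{offer.name}: unit {offer.unit_price:.2f}, "
          f"lifecycle {offer.lifecycle_cost(horizon):.2f}")
```

With these assumed inputs, offer B is cheaper per unit (10 versus 12) but costs 76 per unit over the horizon against 41 for offer A, because its higher failure rate and shorter maintenance interval dominate the purchase price.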

Impact by stakeholder group

Why distributors now need technical intelligence, not just product catalogs

In previous market phases, distributors could rely heavily on nominal specifications, brand familiarity, and historical returns data. That is no longer sufficient. Precision manufacturing customers increasingly ask whether the component can sustain specific vibration profiles, contamination levels, maintenance intervals, or efficiency targets. The distributor who cannot interpret those signals risks becoming commercially replaceable.

This is where technical intelligence platforms gain importance. The ability to track material shifts, tribology trends, fluid control requirements, and application-specific failure patterns turns market information into a strategic asset. In precision manufacturing, technical literacy is becoming a channel advantage.

What smart organizations are watching now in precision manufacturing

A notable trend in precision manufacturing is the move from end-of-line inspection to behavior-based monitoring. Rather than treating quality as a final checkpoint, leading organizations track variation across the full chain: raw material condition, machining response, thermal treatment consistency, surface interaction, assembly fit, and field stress. This broader lens does not eliminate uncertainty, but it reveals weak signals earlier.
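One way to make part of that chain visible is to join field outcomes back to upstream records. The sketch below assumes each shipped unit carries a material lot ID and a field-failure flag (a hypothetical record layout) and flags lots whose failure rate is at least a chosen multiple of the overall rate. The 2x ratio and the minimum lot size are arbitrary assumptions, not a formal statistical test.

```python
from collections import defaultdict


def flag_lots(records, ratio=2.0, min_units=20):
    """Return lot IDs whose field-failure rate is at least `ratio` times the overall rate.

    records: iterable of (lot_id, failed) pairs, one per shipped unit.
    Lots with fewer than `min_units` units are skipped as too small to judge.
    """
    units = defaultdict(int)
    failures = defaultdict(int)
    for lot_id, failed in records:
        units[lot_id] += 1
        failures[lot_id] += int(failed)

    overall = sum(failures.values()) / sum(units.values())
    flagged = []
    for lot_id in sorted(units):
        if units[lot_id] < min_units:
            continue
        rate = failures[lot_id] / units[lot_id]
        if overall > 0 and rate >= ratio * overall:
            flagged.append(lot_id)
    return flagged


# Hypothetical data: three material lots of 100 units each; lot "L2" fails more often.
records = (
    [("L1", i < 1) for i in range(100)]
    + [("L2", i < 6) for i in range(100)]
    + [("L3", i < 1) for i in range(100)]
)
print(flag_lots(records))
```

Here the overall field-failure rate is under 3%, which can look acceptable on a dashboard, while lot L2 alone fails at 6% and is the only lot flagged.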

Another rising focus is the interaction between operating efficiency and durability. Many industrial users now want lower friction, lower energy loss, and longer maintenance intervals at the same time. That combination narrows the margin for hidden variation. A tiny deviation in roundness, coating adhesion, lubrication path, or valve block geometry may have an outsized effect on both efficiency and service life.

Researchers should also pay attention to how quality language is changing. More buyers are asking about performance windows, degradation patterns, and application envelopes rather than simple compliance claims. This indicates a structural shift toward reliability-centered evaluation in precision manufacturing.

Signals worth tracking over the next cycle

- Supplier willingness to share process capability by condition, not only by average output

- Evidence of material lot validation under real application loads

- Changes in complaint timing, especially delayed field issues

- Growth in requests for tribology, fluid dynamics, or fatigue-related technical support

- Increased use of cross-functional reviews linking sourcing, engineering, and reliability teams
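The first signal in the list, capability reported by condition, can be illustrated with a short sketch. Deviations from nominal (in micrometres, tolerance ±6 µm) are tagged with the condition under which they were measured; the condition labels, the data and the 1.33 threshold are hypothetical assumptions. Pooled across all parts the index looks comfortable, while the minority "hot" condition alone falls short.

```python
import statistics
from collections import defaultdict


def cpk(values, lsl, usl):
    """Process capability index from the sample mean and sample standard deviation."""
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)
    return min(usl - mean, mean - lsl) / (3 * sd)


def cpk_by_condition(samples, lsl, usl):
    """samples: list of (condition, deviation). Returns pooled Cpk and per-condition Cpk."""
    groups = defaultdict(list)
    for condition, value in samples:
        groups[condition].append(value)
    pooled = cpk([v for _, v in samples], lsl, usl)
    per_condition = {c: cpk(vals, lsl, usl) for c, vals in groups.items()}
    return pooled, per_condition


# Hypothetical deviations from nominal in micrometres; tolerance is +/-6 um.
samples = [("cold", v) for v in [-1, 0, 1] * 30] + [("hot", v) for v in [2, 4] * 5]
pooled, per_condition = cpk_by_condition(samples, lsl=-6, usl=6)

print(f"pooled Cpk: {pooled:.2f}")
for condition, value in sorted(per_condition.items()):
    note = "" if value >= 1.33 else "  <- below 1.33"
    print(f"{condition:>5} Cpk: {value:.2f}{note}")
```

With this assumed data the pooled Cpk is about 1.54, the "cold" condition about 2.4, and the "hot" condition about 0.95. Because the hot parts are only a tenth of the sample, their shift toward the upper limit barely moves the pooled figure.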

The trend toward system-level validation

One of the strongest signals is the movement away from isolated component approval. In precision manufacturing, more companies now recognize that a good part can become a poor system fit when paired with different housings, lubricants, pressure cycles, or thermal regimes. Validation is therefore moving toward assembly-level and use-case-specific analysis.

This trend is especially relevant in power transmission and fluid control applications, where pressure pulsation, contamination, surface finish, and thermal expansion interact continuously. For information researchers, this means reports built only around nominal specification parity will increasingly miss the competitive reality.

How to judge whether stable quality is real or misleading

The most useful response is not alarmism but better judgment. Stable metrics are not inherently wrong; they are simply incomplete when used alone. In precision manufacturing, the goal is to test whether visible consistency is supported by deep process understanding and reliable field behavior.

For analysts, procurement teams, and technical decision-makers, a practical approach is to compare what is measured, what is assumed, and what is exposed to real operating variability. If those three layers are not aligned, the risk of false stability rises sharply. This framework can be applied across mechanical components, motion assemblies, sealing systems, and hydraulic interfaces.
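The three-way comparison can be kept as a simple record per characteristic. In the sketch below, which uses hypothetical characteristic and condition names, each layer is a set of operating conditions, and the gap report lists conditions the part is exposed to that are only assumed, or not covered at all.

```python
from dataclasses import dataclass, field


@dataclass
class Characteristic:
    name: str
    measured: set = field(default_factory=set)  # conditions covered by test or inspection data
    assumed: set = field(default_factory=set)   # conditions taken on trust (datasheet, supplier claim)
    exposed: set = field(default_factory=set)   # conditions the part meets in real operation

    def gaps(self):
        """Split exposed-but-unmeasured conditions into 'assumed_only' and 'uncovered'."""
        unmeasured = self.exposed - self.measured
        return {
            "assumed_only": sorted(unmeasured & self.assumed),
            "uncovered": sorted(unmeasured - self.assumed),
        }


# Hypothetical seal-groove diameter on a hydraulic valve block.
groove = Characteristic(
    name="seal groove diameter",
    measured={"20C bench"},
    assumed={"thermal cycling"},
    exposed={"20C bench", "thermal cycling", "pressure pulsation"},
)
print(groove.name, groove.gaps())
```

When both lists are empty, measurement, assumption and exposure are aligned for that characteristic; entries under "uncovered" mark where false stability is most likely to hide.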

Importantly, this judgment process should be continuous. Precision manufacturing conditions evolve through tooling wear, workforce changes, supply substitutions, and application upgrades. A supplier judged stable last year may now be operating under different constraints. Ongoing review is therefore more valuable than one-time qualification confidence.

A practical evaluation checklist

Action priorities for the next decision cycle

Organizations that depend on precision manufacturing should strengthen three areas first. They should improve cross-functional reading of quality data, expand evaluation from dimensions to behavior, and build intelligence loops that connect supplier signals with field performance. These actions are practical, scalable, and highly relevant in periods of supply chain and technology transition.

If a business wants to understand how this trend affects its own operations, the most important questions are straightforward: Which “stable” indicators are we overtrusting? Where could hidden variation enter our components or assemblies? And do our sourcing and engineering teams share the same definition of reliability? In precision manufacturing, better answers to those questions create stronger technical decisions and more resilient market positioning.

Related News

- When precision manufacturing quality looks stable but is not (Apr 30, 2026). Precision manufacturing may look stable while hidden variation grows. Learn how to spot false quality signals, reduce risk, and make smarter sourcing and reliability decisions.

- Where precision engineering tolerance gaps usually begin (Apr 30, 2026). Precision engineering tolerance gaps often begin before machining. Learn the hidden causes across design, materials, suppliers, and application scenarios to reduce risk and improve component reliability.


Optical Mech Engineer

Strategic Intelligence Center